Most small teams don’t have a data problem — they have a manual collection bottleneck that an AI web scraping tool can permanently eliminate.

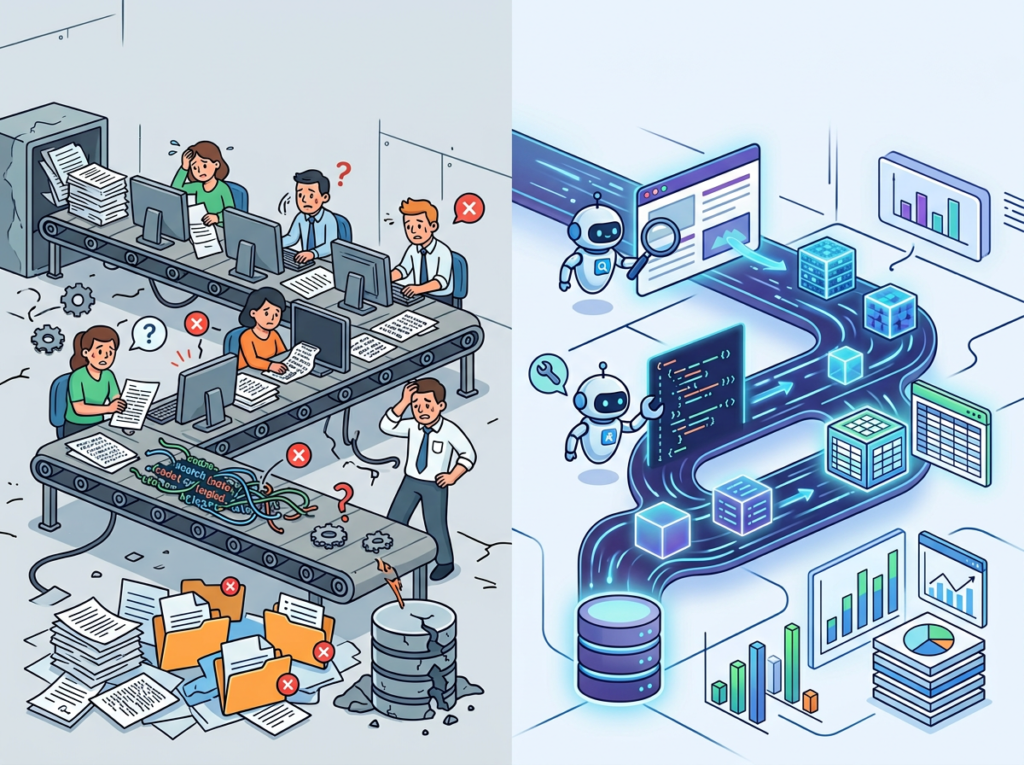

In 2026, American small businesses are drowning in data they can’t efficiently collect. Web pricing tables need constant monitoring. Competitor product listings shift weekly. Lead databases go stale the moment they’re compiled. For a lean team of three to ten people, keeping up with this volume of web data using manual methods isn’t just inefficient — it’s a strategic liability.

The problem isn’t ambition. US founders are building smarter, leaner operations than ever before. The real friction sits upstream: every workflow that depends on fresh web data eventually stalls because someone on the team has to go get it by hand. A marketing lead spends two hours each Monday pulling competitor prices into a spreadsheet. A research analyst refreshes government regulation pages twice a week looking for updates. A sales ops manager manually compiles a contact list from six different directories — a task that kills an entire Friday afternoon.

This is the state of web data collection for most US small teams in 2026: chaotic, manual, and quietly expensive. At a US labor cost of $50–$100 per hour, repetitive web data tasks that consume even five hours per week translate to $13,000–$26,000 in annual labor spend — before accounting for errors, missed updates, or employee burnout from mind-numbing work.
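The arithmetic behind that range is easy to verify. The sketch below assumes 52 working weeks per year; the function name and that 52-week figure are illustrative assumptions, not part of any tool.

```python
def annual_labor_cost(hours_per_week: float, hourly_rate: float, weeks_per_year: int = 52) -> float:
    """Yearly cost of a recurring manual task (assumes the task happens every week)."""
    return hours_per_week * hourly_rate * weeks_per_year

# Five hours per week at the low and high ends of the quoted US labor range
low = annual_labor_cost(5, 50)
high = annual_labor_cost(5, 100)
print(f"${low:,.0f} to ${high:,.0f} per year")
```

Swapping in your own hours and blended rate gives a quick baseline before you evaluate any tool.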

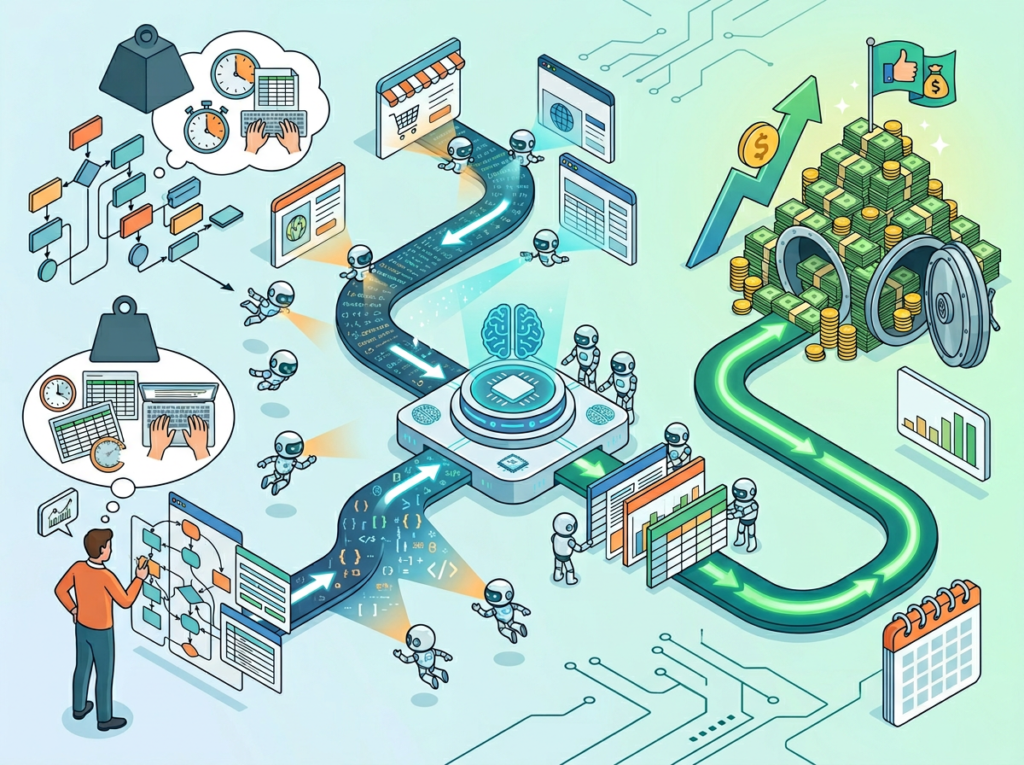

Reworkd AI entered this landscape with a clear proposition: automate the entire web data pipeline, end-to-end, without requiring your team to write or maintain a single line of scraping code. Unlike traditional documentation software or productivity suites, Reworkd was purpose-built for data extraction at scale — letting small teams deploy AI agents that find, extract, validate, and deliver structured web data on autopilot.

This article examines how Reworkd AI fits into the Solo DX framework for US small teams, breaks down its core capabilities by team role, and gives you the exact workflow map to systemize your data collection operations — so your team stops manually pulling information and starts acting on it.

Ready to eliminate manual web data collection from your US team’s workflow in under a week? Try Reworkd AI Free

What Is Solo DX?

Solo DX — Small-Scale Digital Transformation — describes the operational shift that happens when a US founder moves from running everything personally to building systems that let a small team execute with consistency, speed, and minimal supervision. It’s not the enterprise ERP rollout. It’s not the $500,000 Salesforce implementation. Solo DX is what happens when a founder with five employees decides to stop being the single point of failure in their own business.

Corporate SOP methodology — the kind taught in MBA programs and deployed at Fortune 500 companies — consistently fails US SMBs for one simple reason: it assumes dedicated operations staff. A seven-person e-commerce company doesn’t have a Director of Process Improvement. A four-person market research firm doesn’t have a data infrastructure team. What they have is a founder wearing six hats and a small, talented group of generalists who are already stretched thin.

Solo DX acknowledges this reality and asks a different question: how do we build systems that work at this scale, with these people, starting this week?

To understand where data collection fits in the Solo DX picture, it helps to distinguish it from related AI categories:

| Category | Focus | Solo DX Relevance |
|---|---|---|
| AI Efficiency | Individual task speed | Moderate: personal productivity gains |
| AI Revenue Boost | Sales and marketing AI | Moderate: growth tooling |
| Solo DX | Team-wide systems and repeatable workflows | High: operational foundation |
| AI Workflows | Automation pipelines | High: complements Solo DX |

Web data collection sits squarely in the Solo DX column because its bottleneck is structural, not individual. It doesn’t matter how productive each person on your team is if your data pipelines require constant human attention to function. Reworkd AI attacks this structural bottleneck directly.

Consider a real-world example: a three-person market research studio based in Austin needed to track pricing data across 40 competitor websites for an ongoing client engagement. Before deploying an AI web scraping tool, one analyst spent approximately 12 hours per week manually pulling and formatting this data — nearly a third of their productive capacity. That’s the Solo DX gap: a workflow that works, but only because a human is manually running it on repeat.

Explore Reworkd AI’s features to understand how AI-driven extraction agents can replace this kind of repetitive, high-frequency data task across your entire team’s operation.

Why an AI Web Scraping Tool Is Key for Mini-Team Systemization

Problem 1: Knowledge and data live in manual processes, not systems.

On most small teams, web data collection isn’t a system; it’s a habit. One person knows which sites to check. Another has the spreadsheet template. A third has the login credentials for the data portal. When any one of them is on vacation or leaves the company, the data flow stops. US labor turnover sits at approximately 47% annually, meaning nearly half your team could change within 12 months. If your data workflows live inside people’s heads instead of documented, automated systems, every hire and departure is an operational disruption.

Problem 2: Data quality degrades when humans are the pipeline.

Manual data collection is inconsistent by nature. Different team members format cells differently. Someone forgets to check a source one week. A competitor changes their pricing page layout and the tracker breaks silently. Small teams rarely have QA processes for their own internal data workflows, which means the decisions being made downstream are frequently based on data that’s incomplete, stale, or incorrectly formatted.

The AI Solution: End-to-End Pipeline Automation

An automated web scraping AI like Reworkd addresses both failure modes at once. Instead of a person periodically checking websites, an AI agent continuously monitors target pages, extracts the relevant data fields, validates the output, and delivers structured results (in JSON, CSV, or directly into your existing tools) without any manual intervention between cycles.
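To make the "validate, then deliver" step concrete, here is a minimal sketch in plain Python. It does not use Reworkd’s API. The field names, the sample records, and the validation rules are all hypothetical, chosen only to show the shape of the step.

```python
import csv
import io
import json

REQUIRED_FIELDS = ("url", "product", "price")  # hypothetical schema for a pricing extractor

def validate(records):
    """Split records into (valid, rejected): required fields present and a non-negative numeric price."""
    valid, rejected = [], []
    for rec in records:
        has_fields = all(rec.get(f) not in (None, "") for f in REQUIRED_FIELDS)
        price_ok = isinstance(rec.get("price"), (int, float)) and rec["price"] >= 0
        (valid if has_fields and price_ok else rejected).append(rec)
    return valid, rejected

def to_json(records):
    """Serialize records as a JSON array."""
    return json.dumps(records, indent=2)

def to_csv(records):
    """Serialize records as CSV text with a header row; extra keys are ignored."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=REQUIRED_FIELDS, extrasaction="ignore")
    writer.writeheader()
    writer.writerows(records)
    return buf.getvalue()

extracted = [
    {"url": "https://example.com/a", "product": "Plan A", "price": 29.0},
    {"url": "https://example.com/b", "product": "", "price": 49.0},         # missing product name
    {"url": "https://example.com/c", "product": "Plan C", "price": "N/A"},  # non-numeric price
]
valid, rejected = validate(extracted)
print(to_csv(valid))
print(f"{len(rejected)} record(s) held back for review")
```

Keeping rejected records instead of silently dropping them gives whoever reviews the pipeline something to inspect.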

The cost comparison is stark:

- Manual data collection: $13,000–$26,000+ per year in US labor, with high error rates and zero scalability

- AI-assisted collection: Handled within existing subscription overhead, runs continuously, self-heals when source websites change

For US small businesses where every dollar and every hour matters, this isn’t an incremental improvement. It’s an operational category change — the difference between a workflow that depends on a person and a system that runs whether or not that person shows up.

According to this analysis of AI-powered web scraping platforms, the most important capability shift in 2026 is the move from brittle, script-based scrapers to autonomous agents that self-heal when website layouts change — exactly the problem Reworkd was engineered to solve. As this guide to autonomous scraping agents confirms, AI scraping has become essential for any team that depends on fresh competitive or market data to drive decisions.

How Reworkd AI Enables Solo DX

1. AI-Generated Extraction Agents: $2,000–$4,000 Saved Per Setup Cycle

Traditional web scraping requires a developer to write custom code for each target site. At US developer rates of $100–$150 per hour, building even a modest multi-site scraping infrastructure costs $2,000–$4,000 in upfront engineering time — before the maintenance clock starts ticking.

Reworkd AI replaces this entirely. You describe what data you want in plain language, and Reworkd’s AI agents analyze the page structure, generate extraction code, run it, and deliver structured output. No developer required. A non-technical team member can have a functional extractor running within minutes.

For a five-person team needing data from a dozen sources, and assuming each source requires its own setup cycle at that cost, that’s a one-time savings of $24,000–$48,000 in developer time redirected toward product, marketing, or operations.

2. Self-Healing Scrapers: Elimination of Ongoing Maintenance Costs

The hidden cost of traditional web scraping isn’t setup — it’s upkeep. Websites redesign their layouts. They add anti-bot measures. They paginate differently. Every change breaks the existing scraper and requires manual intervention to fix.

Reworkd’s self-healing architecture monitors for changes in website structure, detects extraction failures, and automatically repairs the underlying logic on the fly. For a small team running 20–50 active extractors across competitor sites, industry directories, and public data sources, eliminating manual maintenance can recover 5–8 hours per month in developer or analyst time.

At a US technical labor rate of $100 per hour, that’s $6,000–$9,600 per year in recovered capacity — time that was previously spent on maintenance that produced zero new value.

3. No-Code Pipeline Management: $6,000/Year Saved in Ops Overhead

Because Reworkd is built for non-technical operators, your team’s business analysts, marketing leads, and ops managers can build, modify, and monitor data pipelines without engineering support. When a competitor launches a new product page, the marketing lead can add it to the extraction queue directly — no ticket, no sprint, no waiting.

This reduction in internal handoff delays and cross-functional coordination overhead translates to approximately 2 hours per week in total across the team members involved in data-dependent workflows, or roughly $6,000 annually for a team of three at a blended rate of about $58 per hour.

See how Reworkd AI works in practice before evaluating whether it fits your team’s specific data collection use cases.


Use Cases by Team Role

Persona 1: Startup Founder Juggling Competitive Intelligence

The situation: Maria runs a six-person SaaS startup in San Francisco. Her company competes in a fast-moving market where competitors’ pricing, feature sets, and positioning can shift weekly. She had been manually checking seven competitor websites every Monday morning, a 90-minute ritual that frequently got pushed to Tuesday or Wednesday when things got busy.

Old workflow: Maria opens each competitor’s pricing page, copies the current plan structure into a shared Google Sheet, notes any changes in a Slack message, and hopes the marketing lead sees it before preparing that week’s sales materials. Total time: 90 minutes per week. Total annual cost: approximately $11,700 in founder time at $150/hour.

AI-powered workflow with Reworkd: Maria sets up extraction agents targeting all seven competitor pricing pages. Agents run nightly, extract current pricing tiers and feature lists, and output a structured comparison table to a shared dashboard. A Slack notification fires automatically when any competitor changes their pricing. Maria reviews a one-page summary on Monday morning in under 10 minutes.

Quantified results: 80-minute weekly time savings, $10,000+ in annual founder time recovered, 100% consistency in data freshness regardless of how busy the week gets.

“I used to start every Monday playing catch-up. Now the competitive landscape is already waiting for me, structured and current, before I’ve finished my first coffee.” — Maria, SaaS Founder, San Francisco
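The change alert in Maria’s workflow comes down to comparing last night’s snapshot with tonight’s. A minimal sketch of that comparison follows. The plan names and prices are made up, and the snapshot format (a dict of plan name to monthly price) is an assumption for illustration, not Reworkd’s output schema.

```python
def diff_pricing(previous: dict, current: dict) -> list[str]:
    """Compare two {plan_name: monthly_price} snapshots and describe what changed."""
    changes = []
    for plan in sorted(previous.keys() | current.keys()):
        old, new = previous.get(plan), current.get(plan)
        if old is None:
            changes.append(f"new plan '{plan}' at ${new}")
        elif new is None:
            changes.append(f"plan '{plan}' removed (was ${old})")
        elif old != new:
            changes.append(f"'{plan}' changed from ${old} to ${new}")
    return changes

# Hypothetical snapshots of one competitor's pricing page
last_night = {"Starter": 19, "Pro": 49, "Enterprise": 199}
tonight = {"Starter": 19, "Pro": 59, "Team": 99}

for line in diff_pricing(last_night, tonight):
    print(line)  # in a real setup this text would be sent to a chat channel instead
```

An empty result means nothing changed, so no notification needs to fire.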

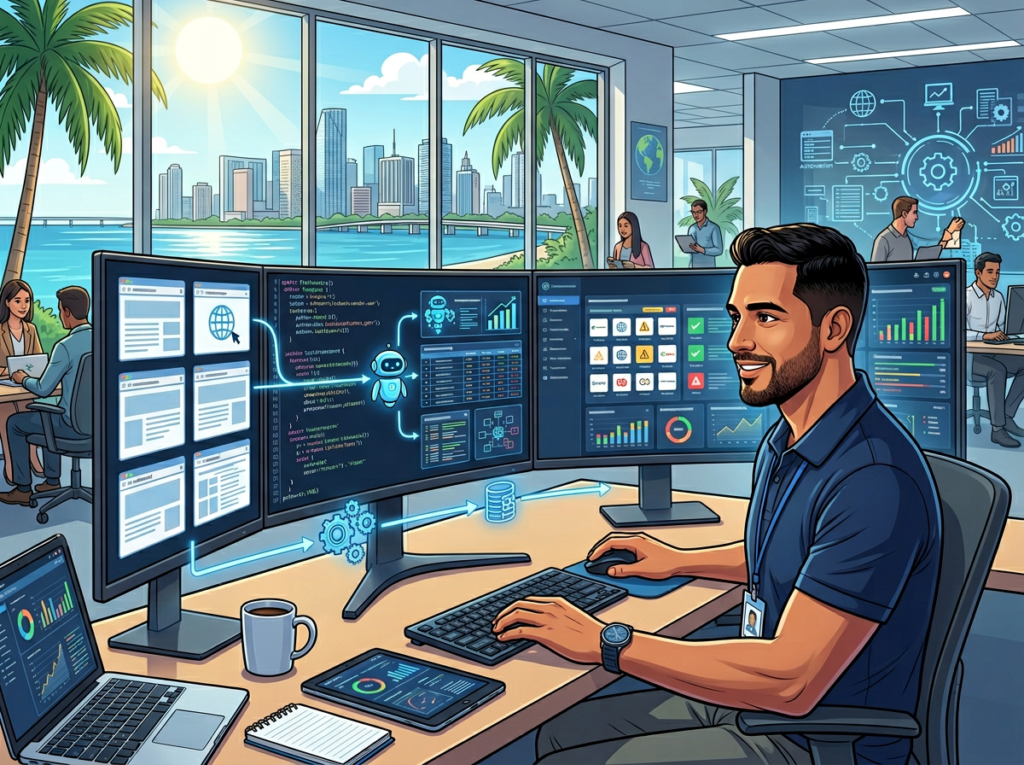

Persona 2: Operations Lead Managing Vendor and Compliance Data

The situation: James is the operations lead for a seven-person logistics consulting firm in Miami. A significant portion of his workflow involves monitoring public government procurement databases, tracking regulatory updates across three federal agencies, and maintaining a current vendor directory from industry association pages — all of which change frequently and unpredictably.

Old workflow: James manually checks 12 different government and association websites weekly, copying relevant entries into a compliance tracking spreadsheet. The process takes approximately 6 hours per week, and it’s impossible to guarantee completeness — websites he visits on Monday may update on Thursday.

AI-powered workflow with Reworkd: James deploys extraction agents against all 12 target sources, configured to pull new regulation entries, updated compliance deadlines, and new vendor listings daily. Outputs feed into a structured database his entire team can query. Alerts trigger when new entries match predefined criteria.

Quantified results: 5.5 hours per week recovered ($17,160 annually at $60/hour), zero missed updates due to manual checking gaps, full team access to current data replacing a single-person bottleneck.

“The compliance tracking piece alone justified the entire investment. I’m not the bottleneck anymore — the system is always running.” — James, Operations Lead, Miami

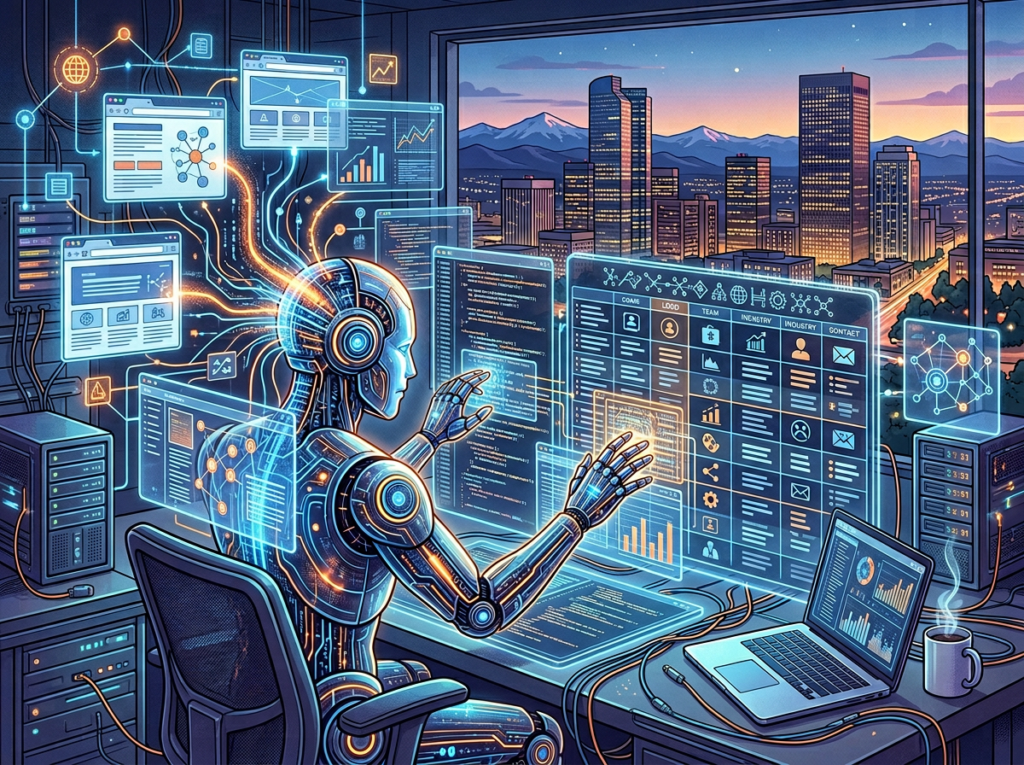

Persona 3: Research Analyst Building Lead and Industry Databases

The situation: Robert is a research analyst at a three-person consulting firm in Denver. A core part of his value to the firm is building curated databases of industry contacts, market participants, and published research. Compiling these from directories and association pages was consuming the majority of his billable week.

Old workflow: Robert spends 15–20 hours per week manually navigating directories, copying contact details, and formatting entries: highly repetitive work that requires his time but not his judgment.

AI-powered workflow with Reworkd: Robert configures targeted extraction agents for the six primary directories his firm relies on. Agents collect structured records — company name, contact details, location, industry category — and output clean, deduplicated data that imports directly into the firm’s CRM.

Quantified results: 12–15 hours per week recovered ($37,440–$46,800 annually at $60/hour), 3x increase in database volume per research cycle, analyst time redirected to higher-value synthesis work.

“The work I used to do in a full week, the system now handles overnight. I spend my time on the analysis that actually requires thinking.” — Robert, Research Analyst, Denver
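"Deduplicated" in Robert’s workflow can mean something as simple as keeping one record per normalized company-and-email pair. The sketch below shows that idea under stated assumptions: the field names and sample records are hypothetical, and a production pipeline would likely need fuzzier matching than this.

```python
def normalize(record: dict) -> tuple:
    """Build a comparison key: lowercased, whitespace-collapsed company name plus lowercased email."""
    name = " ".join(record.get("company", "").lower().split())
    email = record.get("email", "").strip().lower()
    return (name, email)

def deduplicate(records: list[dict]) -> list[dict]:
    """Keep the first record seen for each normalized key, preserving input order."""
    seen = set()
    unique = []
    for rec in records:
        key = normalize(rec)
        if key not in seen:
            seen.add(key)
            unique.append(rec)
    return unique

# Hypothetical directory entries; the first two differ only in casing and spacing
raw = [
    {"company": "Acme Corp", "email": "info@acme.example", "city": "Denver"},
    {"company": "acme  corp ", "email": "INFO@acme.example", "city": "Denver"},
    {"company": "Birch Labs", "email": "hello@birch.example", "city": "Boulder"},
]
clean = deduplicate(raw)
print(len(clean))
```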

Discover Reworkd AI and see which of these use cases maps most closely to your team’s current data collection bottlenecks.

Join data-driven US small teams using Reworkd AI to eliminate manual web collection and scale their operations. See How It Works | Used by teams from Silicon Valley to New York

Common Pitfalls & How to Avoid Them

Pitfall 1: Scraping Everything Instead of the Right Things

The accessibility of no-code extraction can lead teams to over-index on data volume. Teams deploy agents against dozens of sources, accumulate vast amounts of raw data, and then discover they have no workflow for acting on any of it. The result is a data warehouse that consumes storage and generates alerts nobody reads.

The fix: Start with one workflow. Identify the single data collection task that costs your team the most time each week, automate that first, and build a clear downstream process for how the extracted data gets used before adding additional sources.

Pitfall 2: Ignoring Validation Outputs and Assuming Accuracy

As noted in this comprehensive overview of AI web scraping in 2026, even sophisticated AI extraction systems can encounter silent failures when source websites change in unexpected ways. Teams that set-and-forget their extraction pipelines sometimes discover weeks later that a subset of their data has been silently malformed or incomplete.

The fix: Build a weekly 10-minute review into your team’s routine to scan extraction dashboards for anomalies, failure rates, and unexpected output patterns. This is not a substitute for automation — it’s the lightweight oversight layer that keeps automation reliable.
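That weekly review can be partly scripted if your run history is exportable. The sketch below assumes you have per-extractor counts of total runs, failed runs, and row counts. The key names, extractor names, and thresholds are all illustrative assumptions rather than anything Reworkd defines.

```python
def flag_extractors(runs: dict, max_failure_rate: float = 0.1, min_rows_ratio: float = 0.5) -> list[str]:
    """Flag extractors whose failure rate is high or whose latest row count dropped sharply."""
    flagged = []
    for name, stats in runs.items():
        # Treat an extractor with no recorded runs as fully failing
        failure_rate = stats["failed"] / stats["total"] if stats["total"] else 1.0
        rows_dropped = stats["latest_rows"] < min_rows_ratio * stats["typical_rows"]
        if failure_rate > max_failure_rate or rows_dropped:
            flagged.append(name)
    return flagged

# Hypothetical one-week summary for three extractors
weekly_runs = {
    "competitor-pricing": {"total": 7, "failed": 0, "latest_rows": 21, "typical_rows": 20},
    "vendor-directory": {"total": 7, "failed": 3, "latest_rows": 140, "typical_rows": 150},
    "regulation-feed": {"total": 7, "failed": 0, "latest_rows": 4, "typical_rows": 60},
}
print(flag_extractors(weekly_runs))
```

The row-count check matters because a silent failure often looks like a successful run that returned far fewer records than usual.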

Pitfall 3: Using Reworkd in Isolation Instead of Connecting It to Your Existing Tools

Extracted data that lives only inside Reworkd’s dashboard hasn’t been fully operationalized. Its value is fully realized when it flows directly into the tools your team already uses — CRMs, project management platforms, reporting dashboards, or even a well-structured shared spreadsheet.

The fix: Treat your Reworkd outputs as upstream inputs to existing workflows, not as endpoints in themselves. Map the path from extraction to decision before you launch each new agent.

Get a detailed breakdown of Reworkd AI to understand how its output formats and integration options align with your existing tool stack.

FAQs

What’s the difference between AI Efficiency and Solo DX?

AI Efficiency focuses on making individual contributors faster — think AI writing assistants and personal task managers. Solo DX focuses on team-level systems that let multiple people execute consistent workflows reliably. An AI web scraping tool is a Solo DX solution because it replaces a team-wide process bottleneck, not just an individual task.

Can small teams afford to use AI data extraction tools?

Yes. In most cases, the ROI turns positive within the first month. If your team spends even three hours per week on manual data collection at a blended US labor cost of $65/hour, that’s just over $10,000 per year for a single workflow. Most AI extraction tools cost a fraction of that annually.
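One way to sanity-check the first-month claim for your own team is to compute the monthly labor cost of the manual task. Any tool priced below that figure covers its cost within a month, assuming it removes the task entirely. The function name and the 52-week year are assumptions made for this sketch.

```python
def monthly_break_even(hours_per_week: float, hourly_rate: float, weeks_per_year: int = 52) -> float:
    """Monthly labor cost of a manual task, i.e. the most a tool could cost and still pay back within a month."""
    return hours_per_week * hourly_rate * weeks_per_year / 12

annual = 3 * 65 * 52
print(f"Annual manual cost: ${annual:,.0f}")
print(f"Break-even tool price: ${monthly_break_even(3, 65):,.0f} per month")
```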

Is Reworkd AI hard to set up?

Reworkd was built for non-technical operators. You specify target URLs and desired data fields in plain language, and AI agents handle extraction logic, code generation, and maintenance without any technical configuration. Most users have their first functional extractor running within a standard business day.

Conclusion

In 2026, American small businesses don’t need enterprise budgets to build enterprise-level data infrastructure. The gap between a three-person team manually collecting web data and one running fully automated AI-powered pipelines is no longer a technology gap — it’s a systems decision.

Reworkd AI represents the Solo DX approach applied to web data collection: take the workflow costing your team the most repetitive time, automate it completely, and redirect that capacity toward work that actually moves the business forward.

The ROI case is straightforward. If a single team member spends five hours per week on manual data collection (pulling competitor pricing, monitoring regulatory updates, compiling lead databases), that’s a $13,000–$26,000 annual labor cost for one workflow at US rates of $50–$100 per hour. An AI web scraping tool eliminates that cost while delivering more consistent, more current, and more scalable data than any manual process can match.

Start with one process. Identify the one web data task your team performs most repeatedly, and systemize it this week. The operational confidence that comes from knowing that workflow runs reliably — without anyone manually running it — is the foundation every subsequent Solo DX improvement is built on.

Learn more about Reworkd AI and take the first step toward a data collection operation your team doesn’t have to babysit.
