Small teams that learn faster than their competitors win, and in 2026 the best AI research tools make that gap measurable in hours and dollars.

There’s a moment every US small business founder knows. You’ve grown from a solo operator to a team of five or eight, and suddenly the chaos hits differently. Research is piling up in separate browser tabs. Your marketing lead is making decisions based on a competitor analysis that’s six months old. Your new hire in Denver is asking questions that already live in someone’s head in Chicago — but never made it into a shared document.

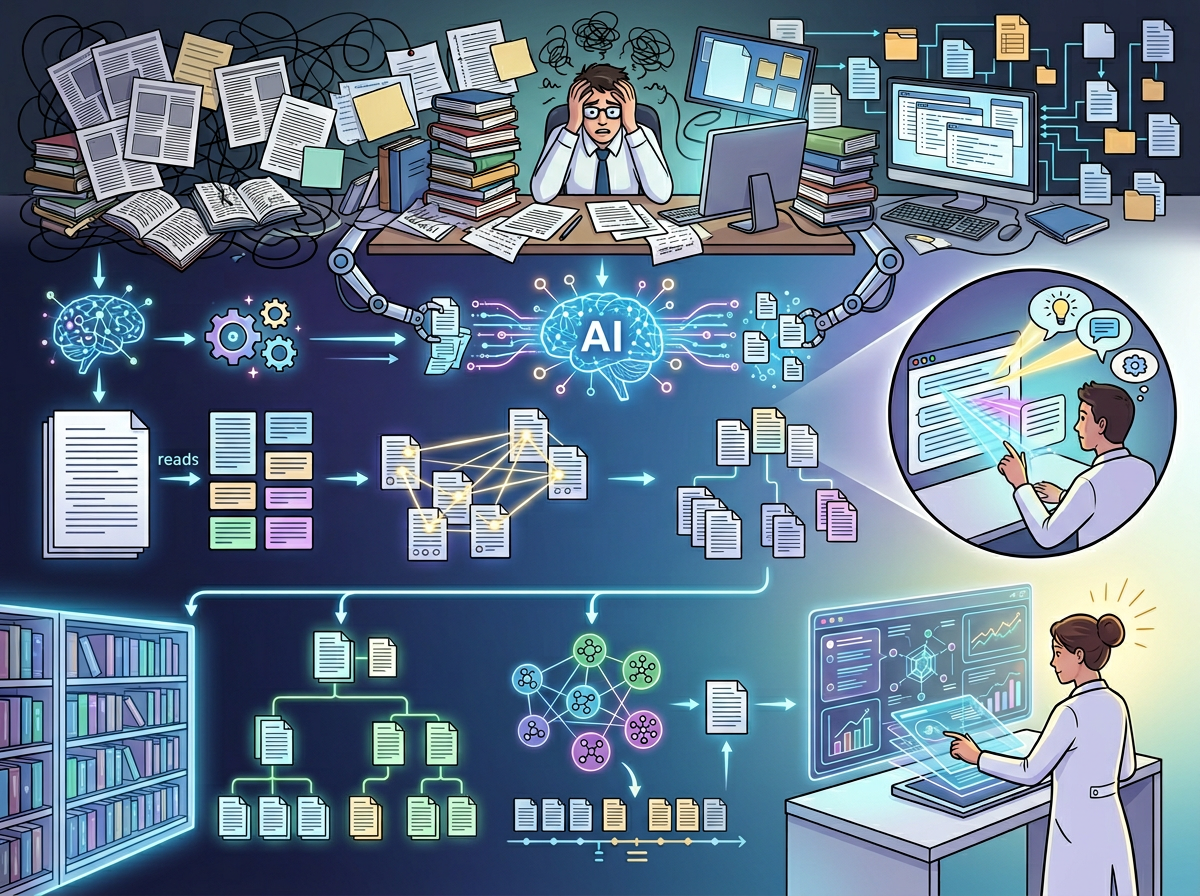

In 2026, knowledge fragmentation is the silent killer of small team momentum. The average US knowledge worker spends 2.5 hours per day searching for information, according to McKinsey. At US labor rates of $75–$100 per hour, that costs a 10-person team well over $120,000 annually in lost productivity. Manual literature reviews and competitive research compound the problem: a thorough market landscape analysis traditionally requires 15–20 hours of skilled labor, or $5,000–$8,000 in consulting fees when outsourced.
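The lost-productivity figure is simple arithmetic, and a short sketch makes the inputs explicit. The 2.5 hours per day, the 10-person team, and the $75–$100 rates come from the paragraph above; the 250 working days per year is an assumption added here for illustration.

```python
# Rough annual cost of time spent searching for information.
# WORKDAYS_PER_YEAR is an assumption (about 50 five-day weeks); the other
# inputs are the figures quoted in the paragraph above.
WORKDAYS_PER_YEAR = 250


def annual_search_cost(hours_per_day: float, team_size: int, hourly_rate: float) -> float:
    """Return the yearly labor cost of search time for the whole team."""
    return hours_per_day * WORKDAYS_PER_YEAR * team_size * hourly_rate


low = annual_search_cost(2.5, 10, 75)
high = annual_search_cost(2.5, 10, 100)
print(f"${low:,.0f} to ${high:,.0f} per year")
```

Under these assumptions the total lands comfortably above the $120,000 quoted above, so that figure reads as a conservative floor rather than an exact estimate.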

This is where AI research tools have moved from “nice to have” to operational necessity.

OpenRead is an AI-powered research platform that gives US small business teams access to over 300 million papers, reports, and documents — with built-in tools to summarize, interrogate, compare, and organize findings in a fraction of the traditional time. For founders managing 1–10 person teams, it functions not just as a research accelerator, but as a shared intelligence system: a living knowledge layer that helps your whole team make better decisions faster.

Unlike generic productivity apps that cost $5,000+ in US labor to implement properly, OpenRead’s paid plans run $5–$20 per month. The ROI math is immediate and compelling.

This guide explains how OpenRead fits into the Solo DX framework — the small-scale digital transformation model built for US founders who are done running everything from memory and ready to build systems that scale.

What Is Solo DX?

Solo DX — Small-Scale Digital Transformation — describes the operational shift that happens when a US founder moves from doing everything themselves to building repeatable, AI-assisted systems that their growing team can actually run.

It’s not about enterprise software. It’s not about hiring an operations manager. It’s about using AI tools strategically to encode the founder’s knowledge, standardize team workflows, and reduce the decision-making bottlenecks that slow small businesses down at exactly the wrong moment.

Solo DX vs. Other AI Categories

| Category | Who It’s For | Primary Goal |
|---|---|---|
| Solo DX | Founders scaling 1–10 person teams | Systemize knowledge and operations |
| AI Efficiency | Individual contributors | Speed up personal task execution |
| AI Revenue Boost | Sales and marketing leads | Drive pipeline and conversion |
| AI Workflows | Operations-oriented teams | Automate repetitive process steps |

Most founders assume they have a productivity problem. Solo DX reframes it: the real problem is that institutional knowledge lives in the founder’s head and nowhere else. When your team of seven is making decisions based on incomplete information — or worse, asking you the same questions repeatedly — you don’t have a staffing problem. You have a knowledge infrastructure problem.

Corporate SOP methods fail US small businesses for a predictable reason: they were built for organizations with dedicated operations managers, legal review cycles, and six-month rollout windows. A three-person design studio in Austin can’t implement a Fortune 500 documentation system. But they can implement Solo DX.

Real example: A three-person brand strategy firm in Austin was spending eight hours per client engagement on competitor research and industry trend analysis. Each team member was pulling from different sources, creating inconsistent recommendations. With no shared research system, every client felt like starting from scratch. Solo DX applied here means using OpenRead to build a shared research workspace: a single intelligent layer where competitive analyses, industry papers, and strategic frameworks live — searchable, summarizable, and accessible to anyone on the team in minutes.

The core promise of Solo DX is simple: you shouldn’t have to be in every meeting, on every call, and in every document for your business to produce consistent, high-quality output.

Why AI Is Key for Mini-Team Systemization

Problem 1: Knowledge Lives in the Founder’s Head

In most 1–10 person US teams, the founder is the de facto research department. They track industry trends, monitor competitors, read relevant studies, and synthesize it all into strategy — then communicate fragments of that thinking in Slack messages, one-off emails, and ad hoc meetings.

This is functionally unmaintainable. When your team acts on secondhand founder knowledge, quality degrades. When you bring on a new hire, you start the knowledge transfer process from zero. When you’re out sick, decisions stall.

AI research tools break this bottleneck by making research a team-accessible system rather than a founder-exclusive function.

Problem 2: New Hires Slow Down Operations

US labor turnover sits at approximately 47% annually across industries. Every new hire represents weeks of knowledge transfer, and every departing employee takes institutional intelligence with them. The average US cost of replacing an employee is $15,000–$25,000 when recruitment, onboarding, and productivity lag are included.

When your research processes are ad hoc and undocumented, new team members take 4–6 weeks to reach productive contribution. When those processes are systematized with AI tools — searchable workspaces, pre-built summaries, documented research frameworks — onboarding compresses to days.

Problem 3: Output Quality Varies Across Team Members

A small team without shared research infrastructure produces inconsistent output. Your senior content strategist in San Francisco and your junior analyst in Miami are building on different information bases, applying different standards, and delivering work that doesn’t feel like it came from the same company.

AI research tools create a consistent intelligence baseline across your entire team.

The Cost Reality

| Approach | Time | Cost (US Labor at $75/hr) |
|---|---|---|
| Manual competitive research per project | 15–20 hrs | $1,125–$1,500 |
| Manual literature review per topic | 10–15 hrs | $750–$1,125 |
| AI-assisted research with OpenRead | 1–3 hrs | $75–$225 + $5–$20/month subscription |

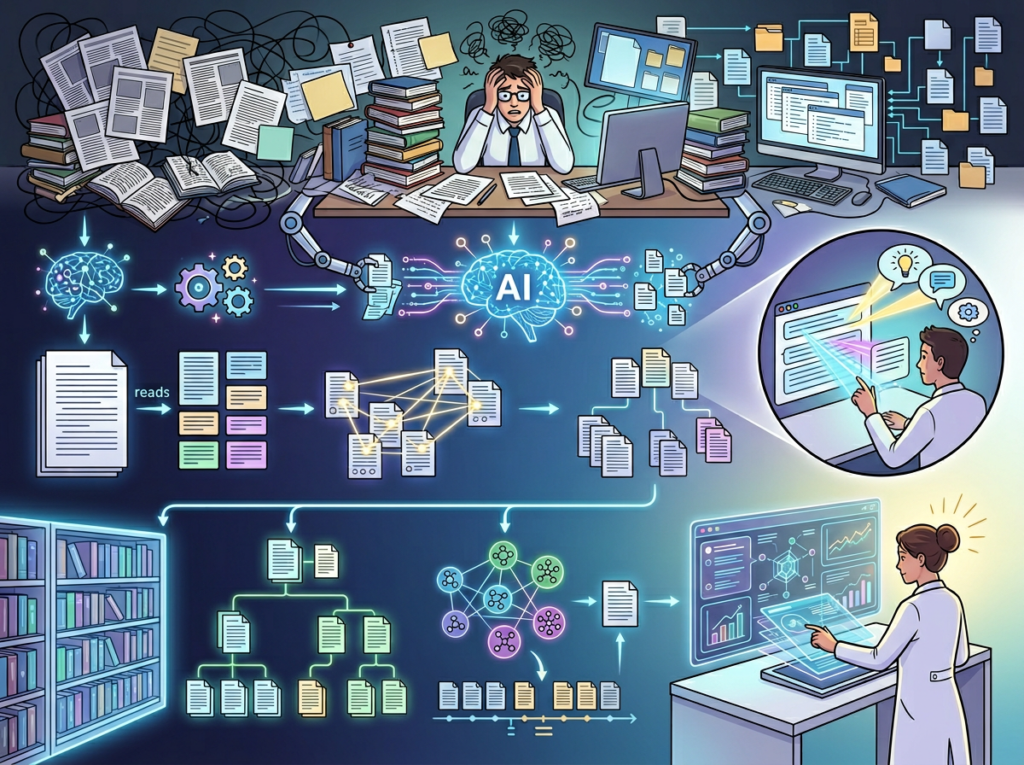

For a team running 4–6 research-dependent projects per month, the annual savings from AI research tools approach $40,000–$80,000 in recovered labor hours — before accounting for the quality improvements that come with consistent, well-sourced team intelligence. This breakdown of OpenRead’s interactive research model provides useful context on how AI-powered paper interaction differs from traditional research workflows.
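To see how the annual savings range depends on its inputs, here is a minimal sketch built from the cost table. The per-project costs and project counts come from the text. Treating the subscription as a single seat is an assumption, and the function name is illustrative.

```python
# Figures from the cost table: labor cost per project at $75/hr.
MANUAL_COST = (1125, 1500)        # manual competitive research, low/high
ASSISTED_COST = (75, 225)         # AI-assisted research, low/high
SUBSCRIPTION_PER_MONTH = (5, 20)  # assumed to cover one seat


def annual_savings(projects_per_month: int, manual: float,
                   assisted: float, subscription: float) -> float:
    """Yearly savings after paying for assisted labor and the subscription."""
    per_project = manual - assisted
    return 12 * (projects_per_month * per_project - subscription)


# Conservative case: cheapest manual work, priciest assisted work, 4 projects.
conservative = annual_savings(4, MANUAL_COST[0], ASSISTED_COST[1], SUBSCRIPTION_PER_MONTH[1])
# Optimistic case: priciest manual work, cheapest assisted work, 6 projects.
optimistic = annual_savings(6, MANUAL_COST[1], ASSISTED_COST[0], SUBSCRIPTION_PER_MONTH[0])
print(f"${conservative:,.0f} to ${optimistic:,.0f} per year")
```

The conservative case lands close to the low end quoted above. The optimistic case comes out higher than the quoted range, which suggests the article's upper figure already discounts the best-case inputs.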

How OpenRead Enables Solo DX

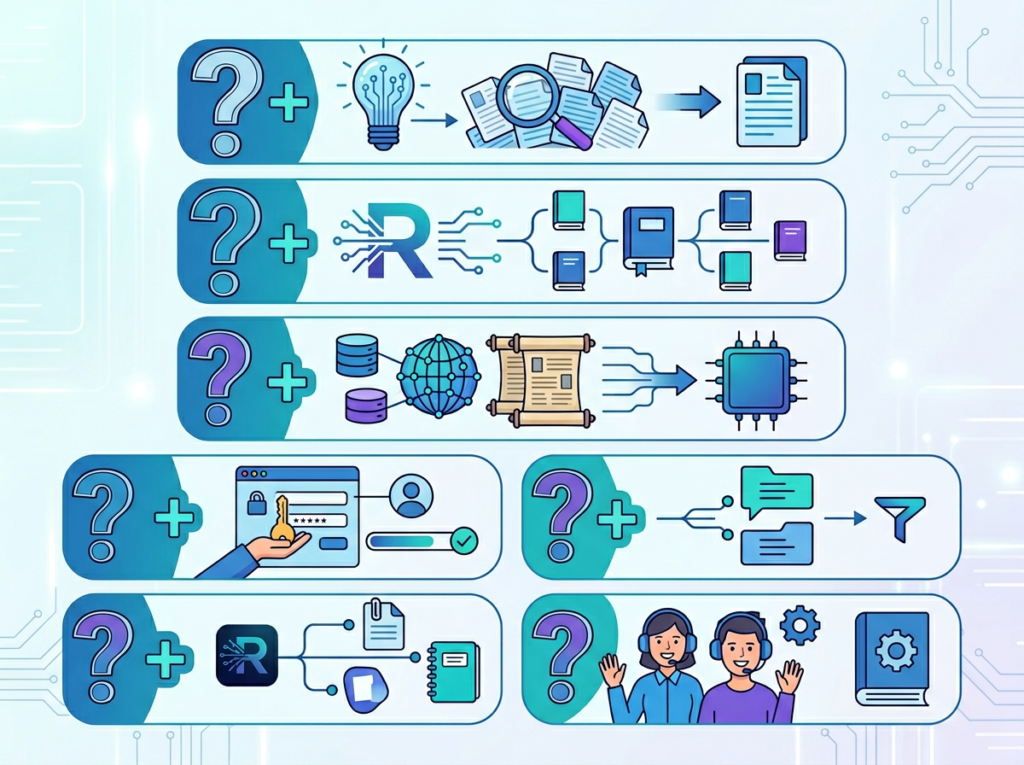

Feature 1: Paper Espresso — AI-Generated Research Summaries

Paper Espresso is OpenRead’s flagship summarization engine. Upload a PDF, paste a URL, or select from over 300 million indexed papers, and Paper Espresso generates a structured breakdown: key findings, methodology, implications, and limitations — in minutes, not hours.

Solo DX application: Instead of assigning a team member to read 12 industry reports before a strategy session, a founder in Chicago can run Paper Espresso across all 12 documents, producing a synthesized briefing document in under an hour. That briefing becomes a reusable team asset — not a one-time email attachment.

ROI: At a US labor rate of $85/hour, replacing 12 hours of manual reading with 45 minutes of AI-assisted summarization saves 11.25 hours, or about $956 per research cycle. For a team running monthly strategy sessions, that’s roughly $11,475 per year in recovered hours.

Feature 2: Paper Q&A via Oat — Conversational Knowledge Retrieval

Oat, OpenRead’s AI assistant, lets team members ask direct questions about research documents and receive answers with source citations. Instead of skimming 40 pages to find one data point, a team member types a question and gets a referenced answer in seconds.

Solo DX application: A six-person consulting firm in Denver uses Oat to onboard new analysts. Instead of spending 20 hours reading the firm’s research library, new hires interact with documents conversationally — asking questions, getting cited answers, building context at their own pace.

ROI: Reducing analyst onboarding research time from 20 hours to 6 hours saves $1,190 per hire at $85/hour. With the roughly 47% annual turnover rate cited earlier, a 6-person team cycling through 2–3 new hires per year captures $2,380–$3,570 annually in onboarding efficiency.
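The link between the turnover rate and the 2–3 hires per year can be sketched as follows. The hours, hourly rate, team size, and turnover rate are the figures from the text. The assumption that every departure is replaced is added here.

```python
def onboarding_savings(hours_before: float, hours_after: float,
                       hourly_rate: float, hires_per_year: float) -> float:
    """Labor cost recovered per year from shorter onboarding research."""
    return (hours_before - hours_after) * hourly_rate * hires_per_year


# Hires implied by the quoted turnover rate, assuming every departure is replaced.
implied_hires = 6 * 0.47  # about 2.8, which is why the text uses 2 to 3

for hires in (2, 3):
    print(hires, onboarding_savings(20, 6, 85, hires))
```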

Feature 3: Collaborative Research Workspaces

OpenRead supports real-time collaboration across team members — shared document libraries, joint annotation, and synchronized research threads. This converts individual research activities into team-accessible knowledge assets that persist beyond any single project.

Solo DX application: A New York-based PR agency creates a shared OpenRead workspace for each client vertical. Every team member working on a fintech client contributes to a shared research pool — articles, papers, competitor analyses — that any colleague can search, summarize, or reference in real time.

ROI: Eliminating duplicated research across a 4-person team (where each person independently finds 2–3 overlapping sources per project) saves 4–6 hours per project cycle. At $80/hour, that’s $320–$480 per project, or $15,360–$23,040 annually across a 48-project workload (four projects per month).

As explored in this OpenRead overview, these features work together to create a research infrastructure that scales with your team — not one that requires you to hire a dedicated researcher to manage.

Ready to systemize your US team’s research operations in under a week? Try OpenRead Free | No credit card required | Trusted by researchers and teams across the US

Use Cases by Team Role

1. Startup Founder Juggling 3 Departments — Maria, San Francisco

The situation: Maria runs a 7-person climate tech startup in San Francisco. She’s simultaneously managing investor relations, product development, and market positioning — all of which require current research on regulatory changes, competitor activity, and scientific developments in carbon capture.

Old workflow: Maria spent 6–8 hours per week scanning newsletters, downloading papers, and sharing Slack summaries that her team half-read. Research quality depended entirely on how much time she could carve out. New developments were routinely missed for 2–3 weeks.

OpenRead workflow: Maria sets up a shared OpenRead workspace with search alerts across her key topic areas. Paper Espresso auto-summarizes new relevant papers as they’re indexed. Her team reviews structured briefings in 15 minutes rather than reading raw documents. Oat answers ad hoc questions from team members without Maria’s involvement.

Results: Weekly research time drops from 7 hours to 90 minutes. Team decision confidence improves because everyone is working from the same current intelligence. Maria recovers 22 hours per month — equivalent to $2,750 in executive labor at her $125/hour consultant equivalent rate.

Maria: “I used to be the only person who actually read the research. Now it’s a team function. My product lead references papers I didn’t even know about.”

2. Research Analyst Building Competitive Intelligence — Robert, New York

The situation: Robert is a senior analyst at a 6-person strategy consultancy in New York. Each client engagement requires synthesizing 20–30 sources into a coherent competitive intelligence report — a process that was taking 20–25 hours per engagement and often produced duplicated effort when two consultants unknowingly researched the same source.

Old workflow: Robert and colleagues maintained separate research folders, emailed each other PDF links, and routinely duplicated work. Final synthesis required a 4-hour working session to reconcile different research threads into a single narrative.

OpenRead workflow: Robert creates shared client workspaces in OpenRead. All team members contribute to one research pool. Paper Compare generates structured cross-source analyses. Oat allows any team member to query the full document library conversationally. The 4-hour synthesis session is replaced by a 45-minute collaborative review.

Results: Per-engagement research time drops from 22 hours (distributed) to 10 hours. Duplicated effort is eliminated. The firm completes 20% more engagements per quarter without additional headcount. At $150/hour senior analyst rates, the per-engagement savings total $1,800 — or $21,600 per year across 12 annual client engagements. This review of OpenRead’s collaborative features provides additional context on how the platform handles multi-user research workflows.

Learn more in the full OpenRead review on AI Plaza to understand how these collaborative research features work in practice.

Robert: “We stopped having the ‘wait, you read that too?’ conversation. Everything goes into the workspace, and everyone can search it.”

Join thousands of US small teams using OpenRead to build sharper research systems. See How It Works | Used by teams from Silicon Valley to New York

Common Pitfalls & How to Avoid Them

Mistake 1: Treating OpenRead as an Individual Tool Instead of a Team System

The single biggest waste of an AI research platform is using it in isolation. Founders who use OpenRead only for their own research get a personal efficiency boost. Teams that build shared workspaces with consistent naming conventions, organized document libraries, and collaborative annotation processes get a systemization return.

Fix: Assign one team member as the “workspace owner” in your first month. Define folder structures and document tagging conventions before everyone starts uploading. A 30-minute setup meeting prevents 6 months of disorganized research sprawl.
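One way a workspace owner might enforce a naming convention is a small check run over document titles before upload. The convention below (`<vertical>_<topic>_<YYYY-MM>`, lowercase words joined by hyphens) is purely hypothetical, and nothing here uses an OpenRead API. It is a sketch of the idea, not a prescribed setup.

```python
import re

# Hypothetical convention: "<vertical>_<topic>_<YYYY-MM>", where vertical and
# topic are lowercase words joined by hyphens.
NAME_PATTERN = re.compile(
    r"[a-z0-9]+(?:-[a-z0-9]+)*"    # vertical, e.g. "fintech"
    r"_[a-z0-9]+(?:-[a-z0-9]+)*"   # topic, e.g. "competitor-pricing"
    r"_\d{4}-(?:0[1-9]|1[0-2])"    # year and month, e.g. "2026-01"
)


def follows_convention(name: str) -> bool:
    """True if the whole document name matches the team's pattern."""
    return NAME_PATTERN.fullmatch(name) is not None


def nonconforming(names: list[str]) -> list[str]:
    """Return the names that need to be fixed before upload."""
    return [name for name in names if not follows_convention(name)]


uploads = [
    "fintech_competitor-pricing_2026-01",
    "Final report v2",
    "healthcare_market-size_2026-13",  # invalid month
]
print(nonconforming(uploads))
```

Whatever convention a team picks, agreeing on it up front and checking it mechanically is what keeps the workspace searchable later.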

Mistake 2: Delegating Research Without Documenting the Output

AI-assisted research is only valuable if the insights survive beyond the project that generated them. Teams that run a Paper Espresso summary, act on the findings, and then let the document sit in a folder have extracted only a fraction of the available value. Most of the rest is in reuse.

Fix: Build a lightweight “research log” in your OpenRead workspace — a running document that captures key findings, decision dates, and outcome notes. Future team members inherit a living knowledge base, not a pile of PDFs.
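As a rough illustration of what a research log entry could hold, the sketch below models the three fields named above (key finding, decision date, outcome note) plus a source and tags. The field names, sample entries, and `search` helper are all hypothetical. In practice the log might simply be a shared document with the same columns.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class LogEntry:
    finding: str
    source: str
    decision_date: date
    outcome: str = ""  # filled in later, once results are known
    tags: list[str] = field(default_factory=list)


def search(log: list[LogEntry], keyword: str) -> list[LogEntry]:
    """Return entries whose finding or tags mention the keyword (case-insensitive)."""
    needle = keyword.lower()
    return [
        entry for entry in log
        if needle in entry.finding.lower()
        or any(needle in tag.lower() for tag in entry.tags)
    ]


# Sample entries are invented for illustration only.
research_log = [
    LogEntry(
        finding="Competitors moved to usage-based pricing",
        source="Hypothetical industry report",
        decision_date=date(2026, 1, 15),
        tags=["pricing", "fintech"],
    ),
    LogEntry(
        finding="Regulatory guidance expected later this year",
        source="Hypothetical policy brief",
        decision_date=date(2026, 2, 3),
        outcome="Delayed launch by one quarter",
        tags=["regulation"],
    ),
]

matches = search(research_log, "pricing")
print([entry.finding for entry in matches])
```

Keeping the outcome field next to the finding is the part that pays off over time: a new hire can see not just what the team learned, but what it decided and how that turned out.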

Mistake 3: Using AI Output Without Human Review

OpenRead’s AI tools are accurate and well-cited, but no AI system is infallible. Teams that treat AI-generated summaries as final truth — without spot-checking sources or applying domain judgment — risk building strategy on flawed foundations.

Fix: Build a two-step review into your research workflow: AI generates the first-pass synthesis, then a team member with domain knowledge validates the key claims before the output is shared with stakeholders. This takes 20–30 minutes and catches most errors before they reach a decision. See the detailed breakdown of OpenRead for notes on how source citations are structured for easier human verification.

FAQs

What is Solo DX?

Solo DX (Small-Scale Digital Transformation) is the operational framework for US founders scaling 1–10 person teams. It focuses on using AI tools to encode founder knowledge, systemize repeatable processes, and reduce the operational bottlenecks that block team performance — without enterprise-level complexity or cost.

How can AI research tools help my team make better decisions?

AI research tools like OpenRead give every team member access to the same synthesized, current intelligence — regardless of seniority or time availability. When decisions are grounded in shared, well-sourced research rather than individual Googling or secondhand founder knowledge, both the quality and consistency of outcomes improve. Teams using shared AI research platforms report 30–40% reductions in decision latency.

What’s the difference between AI Efficiency and Solo DX?

AI Efficiency tools help individuals work faster on their personal tasks — writing, scheduling, data entry. Solo DX is specifically about team-level systemization: building shared knowledge infrastructure, standardizing workflows across roles, and reducing founder dependency. OpenRead operates at the team systemization level when deployed as a shared workspace.

Conclusion

In 2026, American small businesses don’t need enterprise budgets to build enterprise-level research systems. They need the right AI research tools, applied consistently, as a team rather than as individuals.

OpenRead gives US founders and team leads a practical path from research chaos to research infrastructure. Paper Espresso turns days of reading into hours of synthesis. Oat converts document libraries into searchable, conversational knowledge. Paper Compare turns multi-source confusion into clear, cited comparison. Collaborative workspaces turn individual research into shared team intelligence.

The Solo DX principle is simple: systems scale, people don’t. Every hour your team spends duplicating research, explaining things that should be documented, or making decisions on outdated information is an hour that could be recovered through intentional AI-assisted systemization.

Start with one research workflow. Pick your next competitive analysis, market overview, or industry briefing, and run it through OpenRead instead of manually. Measure the time saved. Then build from there.

The teams winning in 2026 aren’t necessarily the ones with the biggest budgets. They’re the ones who made their collective knowledge accessible, searchable, and reusable. That’s what good AI research tools actually deliver.

Get the full breakdown at our OpenRead tool page and see how it fits your team’s specific research workflow.
