Open source AI coding models are rewriting how small dev teams compete — and Code Llama 70B is the one giving scrappy teams enterprise-grade output without the enterprise price tag.

If your engineering team is spending more time hunting down undocumented logic, re-explaining architecture decisions in Slack, or waiting for one senior dev to unblock everyone else — you don’t have a hiring problem. You have a systems problem. And in 2026, that problem is costing American small teams real money.

The average US software developer earns between $120,000 and $160,000 annually. When those developers spend 30–40% of their time on repetitive, low-complexity coding tasks — boilerplate, documentation, test generation, code reviews — you’re burning $36,000 to $64,000 per developer per year on work that an open source AI coding model for developers can handle in seconds.

Remote-first engineering teams across Austin, Denver, Chicago, and the Bay Area are facing the same growing pain: they’ve scaled beyond one or two co-founders, but they haven’t built the systems to match. Knowledge lives in one senior developer’s head. New hires take three to six weeks to become productive. Code quality varies wildly depending on who wrote it and when. Pull requests pile up because no one documented the decision-making process behind the codebase.

This is exactly the operational chaos that Solo DX is designed to solve — and Code Llama 70B is one of the most powerful tools small development teams can use to get there.

Unlike traditional documentation approaches that can cost $5,000 or more in US labor just to produce a basic SOP set, Code Llama 70B is an open-weight model that can be self-hosted, fine-tuned, and deployed on your own infrastructure. It was built specifically for code-related tasks: generation, completion, debugging, explanation, and test writing. For a small team with a tight budget and an ambitious shipping schedule, that’s not a nice-to-have. It’s a competitive advantage.

This guide walks through exactly how Code Llama 70B enables small US development teams to systemize their workflows, reduce bottlenecks, and ship faster — without adding headcount.

Join thousands of US small development teams using Code Llama 70B to eliminate operational bottlenecks. See How It Works

What is Solo DX?

Solo DX stands for Small-scale Digital Transformation — the process of building repeatable, documented, AI-assisted systems within a small team, led by a founder or team lead without access to a dedicated operations manager or enterprise IT department.

It’s a category distinct from general AI productivity tools. Where AI Efficiency focuses on individual-level output gains, Solo DX is about the operational layer: knowledge capture, process documentation, repeatable workflows, and team-wide consistency. The goal isn’t just to make one developer faster. It’s to build systems that make the whole team faster — and keep working even when key people are unavailable.

How Solo DX compares to other AI categories:

| Category | Focus | Who Benefits | Primary Outcome |

|---|---|---|---|

| Solo DX | Team systems & workflows | Founders, team leads | Repeatable operations |

| AI Efficiency | Individual output | Freelancers, solo operators | Personal productivity |

| AI Revenue Boost | Sales & marketing automation | Growth teams | More pipeline |

| AI Workflows | Process automation | Ops-heavy teams | Reduced manual steps |

Corporate SOP methodologies — the kind used by Fortune 500 companies — fail for US small teams for a predictable reason: they were designed for companies with dedicated process engineers, legal review teams, and months of runway to implement. A six-person dev shop in Austin or a bootstrapped SaaS team in Chicago doesn’t have that infrastructure. They need systems that can be built fast, iterated on quickly, and maintained without a full-time operations hire.

Consider a real-world example: a three-person product studio in Austin had all their deployment knowledge living inside one senior developer’s private notes. When that developer took two weeks off, the team was blocked on three client deliverables. There was no runbook. There was no documented process. There was no way to hand off the work without a two-hour call. That’s a Solo DX problem — and it’s one that Code Llama 70B is specifically positioned to solve.

By using an open source AI coding model for developers like Code Llama 70B, that Austin studio was able to generate deployment documentation, create annotated code explanations, and build internal Q&A tooling directly from their existing codebase — in under a week. You can explore Code Llama 70B’s features to understand exactly what capabilities are available for teams in this position.

The key insight of Solo DX is this: you don’t need more people to build better systems. You need the right AI tools and the discipline to use them consistently.

Why AI is Key for Mini-Team Systemization

American small development teams face three systemic problems that grow more expensive as the team grows. Each one has a direct AI solution — and a calculable cost if left unaddressed.

Problem 1: Knowledge Lives Only in the Founder’s (or Senior Dev’s) Head

In a two or three person team, this is manageable. By the time you hit five to eight people, it’s a crisis. The senior developer becomes the single point of failure for every architectural question, every deployment decision, every “why did we build it this way” conversation. That developer is now spending 10–15 hours per week in context-switching mode instead of shipping.

At a fully-loaded cost of $85–$120/hour for a senior US developer, that’s $44,200–$93,600 in annual cost just from knowledge-bottleneck overhead.

AI Solution: Code Llama 70B can be used to generate inline documentation, explain complex code in plain English, and produce contextual Q&A responses from existing codebases — turning tribal knowledge into searchable, usable assets.

Problem 2: New Hires Slow Down Operations

US labor turnover in the tech sector runs at roughly 13–18% annually in competitive markets. Every time a new developer joins the team, the ramp-up period — getting familiar with the codebase, understanding conventions, learning the deployment workflow — takes three to six weeks on average. During that time, the new hire is consuming senior developer time rather than contributing output.

That ramp-up period costs approximately $8,000–$18,000 per new hire in lost productivity and senior developer time, depending on team size and project complexity.

AI Solution: Code Llama 70B can generate onboarding documentation directly from a codebase, produce annotated walkthroughs of key modules, and answer developer questions in natural language — cutting ramp-up time by 40–60%.

Problem 3: Code Quality Varies Across Team Members

Without documented standards, code review guidelines, and shared conventions, quality becomes personality-dependent. Some developers write thorough tests. Others don’t. Some follow the architecture. Others improvise. The result is a codebase that becomes increasingly expensive to maintain.

AI Solution: Code Llama 70B can enforce consistent patterns by generating boilerplate, suggesting test cases, and flagging deviations from established conventions — acting as an always-available code standards engine.

The Cost Reality:

Manual systemization — hiring a technical writer, running documentation sprints, building onboarding materials from scratch — costs $5,000–$15,000 in US labor and takes four to eight weeks. With Code Llama 70B running on self-hosted infrastructure, the same output can be produced in hours, at a cost of $0 in subscription fees (open-weight model) plus modest compute costs.

Join thousands of US small development teams using Code Llama 70B to eliminate operational bottlenecks. See How It Works

How Code Llama 70B Enables Solo DX

Code Llama 70B is a code-specialized large language model released by Meta AI, built on the Llama 2 architecture and trained on 500 billion tokens of code and code-related data. The 70B parameter version is the most capable in the family, designed for tasks requiring deep code understanding: complex generation, multi-file reasoning, explanation, and fine-tuning on proprietary codebases.

Here’s how four specific capabilities map directly to Solo DX outcomes for small US development teams.

Feature 1: AI-Generated Code Documentation and SOPs

Code Llama 70B can take raw source code — functions, modules, entire files — and generate structured documentation, inline comments, and plain-English explanations suitable for onboarding materials or internal wikis.

ROI: A typical documentation sprint for a 10,000-line codebase costs $2,000–$4,000 in US labor at $80–$100/hour. With Code Llama 70B, the same documentation can be generated in 2–4 hours of prompt engineering and review work. Estimated savings: $2,000–$3,500 per documentation cycle.

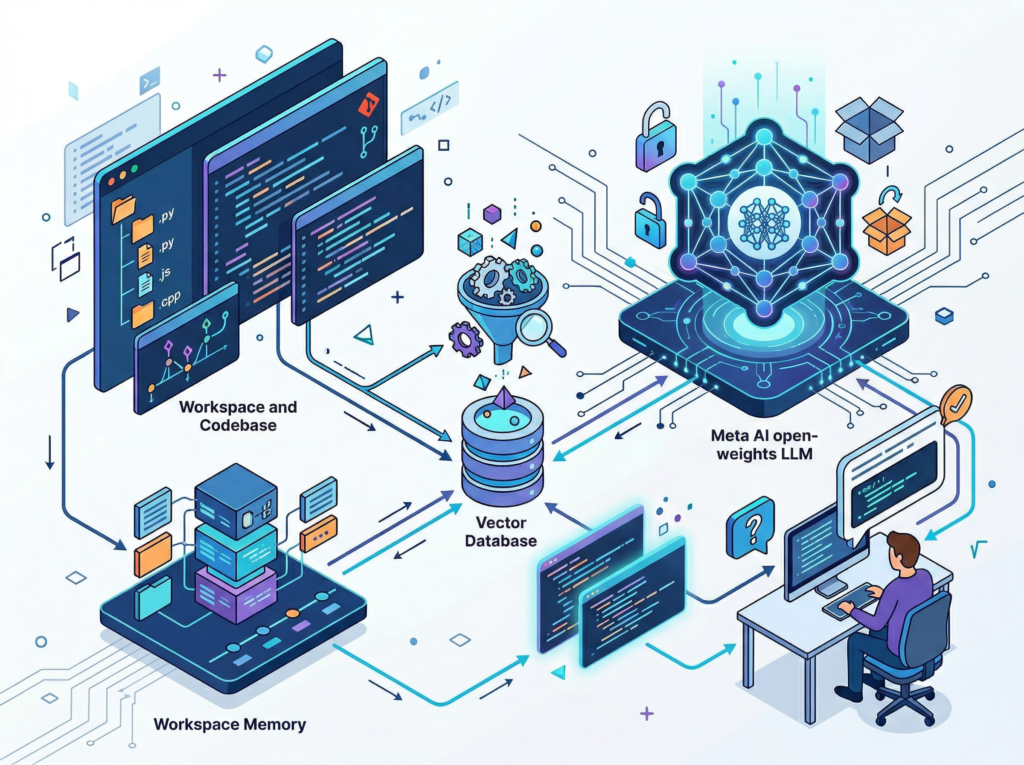

Feature 2: Codebase Q&A and Workspace Memory (via RAG Integration)

When Code Llama 70B is integrated with a retrieval-augmented generation (RAG) pipeline pointed at your codebase, it becomes a searchable knowledge base. Developers can ask natural language questions — “How does the authentication module handle token refresh?” — and receive accurate, context-aware answers without interrupting senior team members.

ROI: If this eliminates 5 interruptions per developer per week at 15 minutes each, and you have four developers at $80/hour: 4 developers × 5 questions × 0.25 hours × $80 × 50 weeks = $80,000 annually in recovered senior developer time.

Feature 3: Template and Boilerplate Automation

Repetitive code patterns — API wrappers, database schemas, component scaffolding — can be templated and generated on demand. Code Llama 70B can be fine-tuned on your own codebase conventions to produce boilerplate that already follows your team’s patterns.

ROI: Estimated time savings of 2–4 hours per week per developer on scaffolding work. At $80/hour with a team of four: $33,280–$66,560 annually in recovered development time.

See how Code Llama 70B works across all of these use cases, including deployment configurations and hardware requirements for self-hosting.

Ready to systemize your US team’s development workflow in under a week? Try Code Llama 70B Free | No credit card required | Trusted by development teams across the US

Use Cases by Team Role

Persona 1: US Startup Founder Juggling 3 Departments — Maria, San Francisco

Old Workflow: Maria is the CTO and de facto product lead of a six-person SaaS startup in San Francisco. Every architectural decision runs through her. New features get delayed because junior developers aren’t sure how to extend the existing data models without breaking something. She spends every Monday morning in “explanation mode” before writing a single line of code herself.

AI-Powered Workflow: Maria uses Code Llama 70B to generate a living architecture document from the codebase — one that answers common structural questions automatically. She sets up a lightweight RAG pipeline so developers can query the codebase directly. Architectural decisions are now logged as annotated code comments generated by the model.

Results: Maria recovered 8–10 hours per week of uninterrupted engineering time. Junior developers became self-sufficient within two weeks of onboarding. Estimated value: $62,400–$78,000 annually at her effective billing rate.

“It’s like having a senior engineer on call who’s read every line of our codebase and never forgets anything.” — Founder profile composite, SF Bay Area

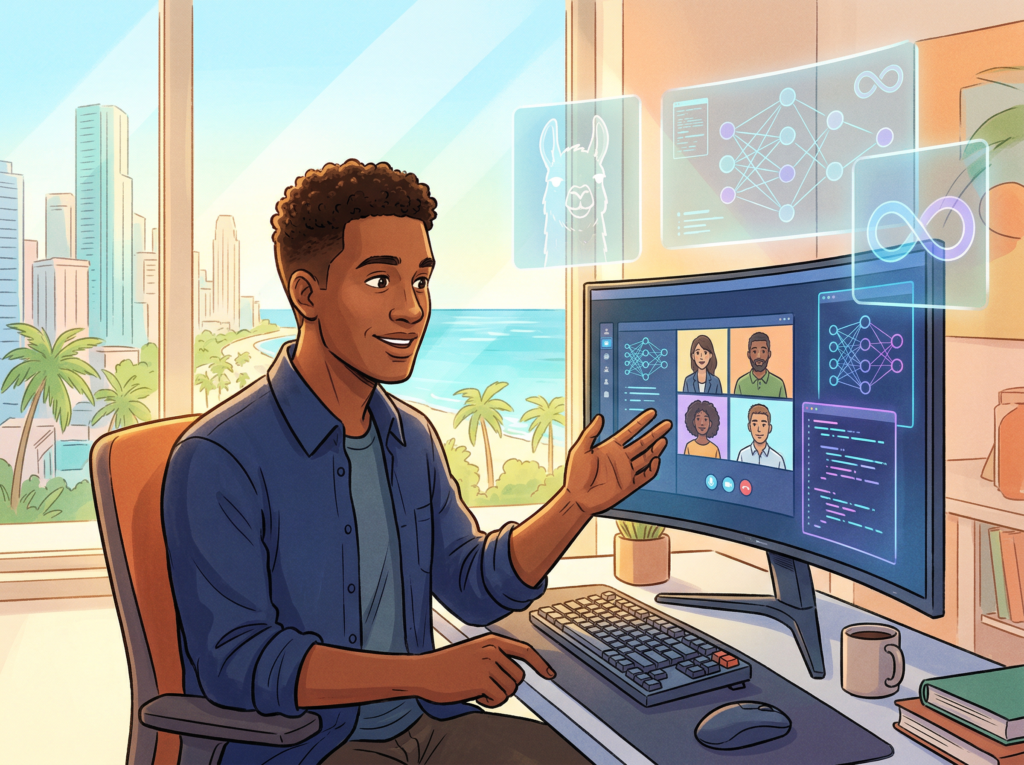

Persona 2: Engineering Lead Onboarding Remote Staff — James, Miami

Old Workflow: James runs engineering for a fully remote product team spread across Miami, Denver, and Chicago. Every new hire onboarding involves the same three-week process: a series of calls, a Notion doc that’s three versions out of date, and an informal “shadow a senior dev” period. It’s inconsistent, time-consuming, and dependent on whoever happens to be available.

AI-Powered Workflow: James uses Code Llama 70B to generate a structured onboarding guide directly from the codebase — annotated module walkthroughs, environment setup scripts, and a Q&A interface that answers new hire questions in real time. According to this breakdown of fine-tuning Code Llama 70B Instruct, fine-tuning on proprietary codebases is achievable even for teams without dedicated ML engineers.

Results: New hire ramp-up dropped from 4 weeks to 10 days. Senior developer onboarding time was cut by 70%. Estimated annual savings across three hires per year: $24,000–$54,000.

“We went from hoping new hires would figure it out to having a system that actually works every time.” — Engineering lead profile composite, remote team

Join thousands of US small development teams using Code Llama 70B to eliminate operational bottlenecks. See How It Works

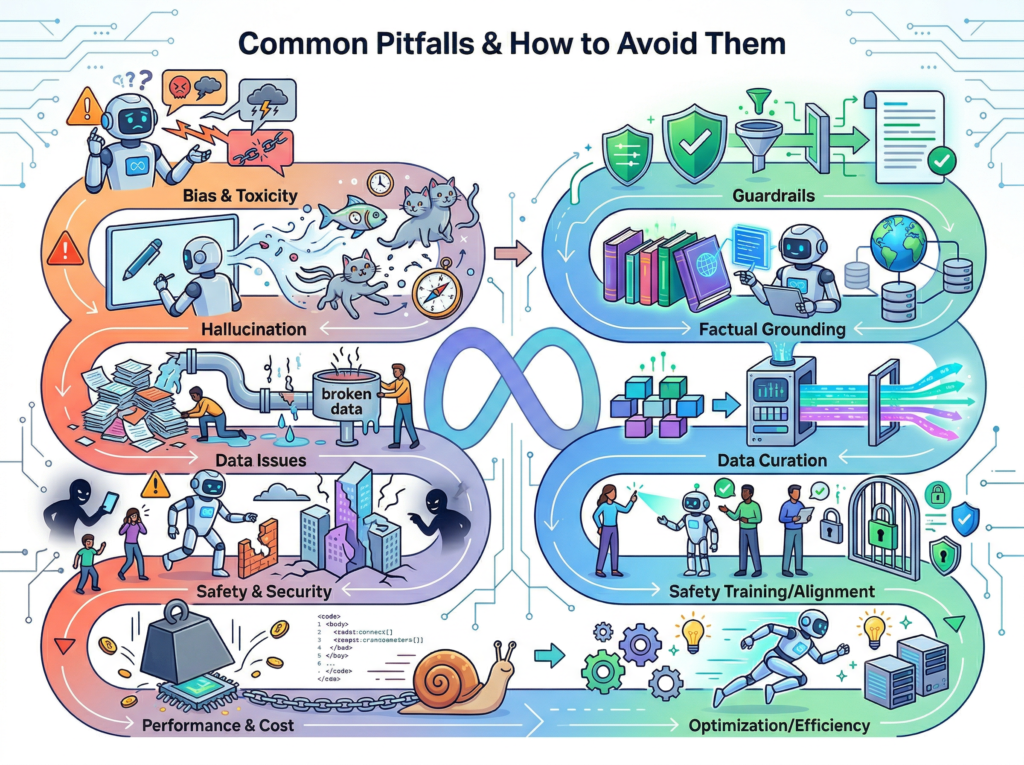

Common Pitfalls & How to Avoid Them

Even well-intentioned teams run into implementation problems. Here are the four most common mistakes US small development teams make when adopting an open source AI coding model for developers like Code Llama 70B — and how to avoid each one.

Pitfall 1: Using Too Many Disconnected Tools

Teams often layer Code Llama 70B on top of a fragmented stack — one tool for documentation, another for code review, another for onboarding — without integration. The result is a system that’s harder to maintain than the problem it was supposed to solve.

Fix: Start with one workflow. Pick the highest-pain bottleneck (usually documentation or onboarding) and build a complete system around it before expanding. Use Code Llama 70B as the core engine and keep the surrounding tooling minimal.

Pitfall 2: Failing to Review AI Output

Code Llama 70B is a powerful open source AI coding model for developers, but it produces output that requires human review — especially in security-sensitive contexts, complex business logic, and external API integrations. Teams that treat AI output as production-ready without review accumulate technical debt fast.

Fix: Build review checkpoints into your workflow from day one. Treat Code Llama 70B output the way you’d treat a junior developer’s first PR: review it, give feedback, and iterate. A detailed breakdown of Code Llama 70B covers the model’s known limitations and recommended validation practices.

Pitfall 3: Over-Relying on Slack and Email for Knowledge

Slack threads and email chains are knowledge graveyards. They’re searchable in theory and useless in practice. Teams that continue routing important technical decisions through chat instead of their AI-assisted knowledge base are building a system that leaks.

Fix: Establish a simple rule: any technical decision that would take more than 5 minutes to explain goes into the documented knowledge base, not a Slack message. Code Llama 70B can help draft those entries in under two minutes.

Join thousands of US small development teams using Code Llama 70B to eliminate operational bottlenecks. See How It Works

FAQs

What is Solo DX? Solo DX (Small-scale Digital Transformation) is the practice of building repeatable, AI-assisted operational systems within a small team, led by a founder or team lead without a dedicated operations manager. The goal is to create the same kind of systemized workflows that enterprise companies have — without the enterprise overhead.

How can AI write my code documentation and SOPs? Code Llama 70B can analyze your existing codebase and generate inline documentation, module-level READMEs, plain-English architecture explanations, and structured onboarding guides. You provide the code; the model produces the documentation. Most teams can get a first draft of their core documentation in a single afternoon.

What’s the difference between AI Efficiency and Solo DX? AI Efficiency focuses on making individual developers faster — better autocomplete, faster debugging, quicker lookups. Solo DX focuses on the operational layer: team-wide systems, shared knowledge bases, repeatable onboarding, and consistent code quality standards. Both matter, but Solo DX has a multiplier effect across the entire team.

Can small teams afford to use Code Llama 70B? Yes. Code Llama 70B is an open-weight model, which means you can self-host it without per-token API fees. Hardware costs vary depending on your setup — this guide to self-hosting 70B models affordably covers practical options ranging from cloud instances to on-premises GPU servers. For many small teams, the total compute cost runs $50–$300/month — a fraction of the labor cost it offsets.

Is Code Llama 70B hard to set up? Setup complexity depends on your infrastructure. Running the model locally requires a GPU with at least 40GB VRAM (for full precision) or quantized configurations that run on more modest hardware. Cloud deployment via providers like AWS, GCP, or Azure is straightforward and documented. For teams without ML engineering experience, using a managed inference endpoint is typically the fastest path to a working implementation.

Conclusion

In 2026, American small development teams don’t need enterprise budgets to build enterprise-level engineering systems. They need the right open source AI coding model for developers — and the discipline to deploy it around their actual operational bottlenecks.

Code Llama 70B offers something that most commercial AI coding tools don’t: the ability to self-host, fine-tune, and deeply integrate with your proprietary codebase without sending your code to a third-party API. For teams working with sensitive client data, proprietary algorithms, or compliance requirements, that’s not a minor feature. It’s a decisive one.

The Solo DX framework gives small teams a clear implementation path: start with the highest-pain bottleneck, build one system around it, prove the ROI, and expand. Whether that’s codebase documentation, new hire onboarding, test generation, or standards enforcement — Code Llama 70B provides the AI coding foundation to make it work.

Start with one process. Systemize it this week. The compounding value of documented, repeatable systems doesn’t show up on day one — but it shows up decisively by month three.

Learn more about Code Llama 70B and take the first step toward a development team that ships consistently, onboards fast, and stops losing institutional knowledge every time someone goes on vacation.

Leave a Reply